With all the previous discussion of paid reviews and my unwillingness to raise the white flag or bend over, this post is going to come as a bit of a shock.

I am lowering the red flag

Carry on reading to find out why this isn’t the same as raising a white flag, and is far from surrendering to Google on paid reviews.

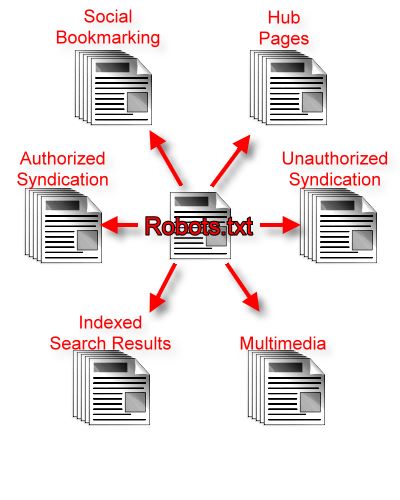

Robots.txt

I have spent a long time deciding on a course of action, and have decided that blocking my content using Robots.txt is ultimately better for me, and better for people hiring my services.

It also happens to be worse for Google than currently, but that is the beauty of this strategy.

It might be harder to rank, but pages blocked using robots.txt still gather PageRank and can appear in the index, though they would be looked on as dangling pages.

Ultimately links can always be redirected to a followup review which refers to the first, and that followup isn’t a paid review.

It is a little naughty: people will sometimes receive editorial links within reviews, along with a trackback, but I don’t know of any spam plugin that checks robots.txt, and the links will still be valuable in other search engines.

Google’s Achilles Heel With Paid Reviews

This is the only domain on which a client is paying for a review. When my content appears on other sites, it goes through a totally different editorial process, and the links can in no way be looked on as paid links.

Content syndication is extensive:

1. SOCIAL BOOKMARKING

Sites such as BloggingZoom encourage more than just a single line of description and rewritten titles on submissions, and not only deliver traffic from their existing user base, but also search traffic.

2. HUB PAGES

Many content sites allow you to use syndicated content in the form of article feeds, and content is even picked up by larger sites such as Topix.

3. AUTHORIZED SYNDICATION

You can arrange for your content to be selectively syndicated on authority sites, such as Andy Beard on WebProNews and even my WordPress SEO reviews published on SearchNewz.

Whilst I haven’t made it clear recently, I publish all my content under the GPL. In fact, I am switching to the GFDL with an invariant clause requiring a live hyperlink back to the original without nofollow. I prefer the GFDL over Creative Commons because of this flexibility (for me) to be highly specific.

In future I am going to be actively encouraging syndication.

4. UNAUTHORIZED SYNDICATION

This is technically the same, but as long as people scraping my content are linking back to me, preferably with a followed link, it is great. I am not even worried about some light spinning of the content, as long as they state that the content has been modified and is only based on my original.

5. INDEXED SEARCH RESULTS & AGGREGATORS

This is the likes of Technorati, and feed readers that are indexed – I have no intention of blocking reviews from RSS feeds.

6. MULTIMEDIA

I use a lot of pictures and screenshots for my reviews, but this is going to increase – in addition I will also be creating podcasts and screencasts which will be widely distributed in their own right.

Hooray for Universal search!

No Nofollow = Editorial Backlinks

By not using nofollow in my reviews, it is most likely that syndicated copies of my reviews will provide backlinks not just for me, but also for my clients. In many cases the backlinks are editorial, because someone has chosen to syndicate my content.

Unfortunately Google use backlinks to attribute content to an original source, but that is a whole lot harder if they can’t index the original. It will be interesting to see which sites syndicating my work rank highly, and how many.

Linking to Syndicated Content

This is something I haven’t decided on yet, but just like I can link through to my various social profiles, I do have the option to link through to my content on other domains after it has been syndicated.

Worse for Google

My content will still be in the index, filtered through an extra layer of editorial control, but there is going to be a whole lot more of it.

Google have made it clear that they are only worried about the existence of links, and not the time it takes to create content, expertise, and whether links within reviews were specified or given in an editorial capacity.

I honestly don’t like junk reviews written purely for SEO purposes. But Google seem determined to impose the letter of the law rather than the spirit, throwing the baby out with the bath water. So whilst I will comply with the letter of the law, I can’t see a reason why I shouldn’t sidestep the charging bull.

Nofollow is not the answer to Google’s troubles

Update

There seem to be some misunderstandings, and I need to clear them up.

1. The blocking hasn’t happened yet; it is the next thing on the to-do list.

2. I intend to get more search traffic from Google taking this action, not less.

Update 2

Robots.txt has now been modified

User-agent: *

Disallow: /Recommends/

Disallow: /downloads/

User-agent: Googlebot

Disallow: /2007/08/plagiarism-checker-outsourcing.html

Disallow: /2007/07/gather-success-review.html

Disallow: /2007/06/wordpress-seo-masterclass-for-competitive-niches.html

Disallow: /2007/05/bidvertiser-review.html

Disallow: /2007/05/seo-consulting.html

Disallow: /2007/04/ibegin-source-review.html

Disallow: /2007/03/sponsored-reviews-now-live-in-depth-review.html

Disallow: /2007/03/volusion-review-and-suggestions.html

Disallow: /2006/12/search-engine-glossary.html

The list is quite short, but now that I have a strategy in place, I will be writing a lot more paid reviews.

Whilst this might be looked on as insignificant, some of those pages rank quite well for very useful terms, and are probably worth 2000+ visitors per month.
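As the list of paid reviews grows, the file could be generated rather than edited by hand. The following is only a minimal sketch in Python, not how the file above was actually produced: the function name `build_robots_txt` and its two parameters are hypothetical, and the paths are a subset of those listed above.

```python
def build_robots_txt(blocked_for_all, blocked_for_googlebot):
    """Return robots.txt text with a general group and a Googlebot group.

    Both arguments are lists of URL paths (hypothetical helper, for
    illustration only).
    """
    lines = ["User-agent: *"]
    lines += [f"Disallow: {path}" for path in blocked_for_all]
    lines.append("")  # a blank line separates the two groups
    lines.append("User-agent: Googlebot")
    lines += [f"Disallow: {path}" for path in blocked_for_googlebot]
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    paid_reviews = [
        "/2007/08/plagiarism-checker-outsourcing.html",
        "/2007/05/seo-consulting.html",
    ]
    print(build_robots_txt(["/Recommends/", "/downloads/"], paid_reviews))
```

Adding a new paid review then means appending one path to the list and regenerating the file.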

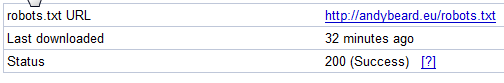

Update 3

Whilst the changes to robots.txt were quite straightforward, I thought it important to check the robots.txt within the Google webmaster console before making any reinclusion or reconsideration request.

First of all I waited for it to be refreshed by Googlebot, which seems to happen approximately once every 24 hours.

There is an option to just copy and paste the updated file by hand, but waiting for it to be fetched is more conclusive.

Next I entered the URLs that need to be blocked by the robots.txt file, and checked them.

In theory Googlebot will now be blocked from crawling the “offending” pages, and I will be able to ask for reconsideration.
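The same check can be roughed out locally before waiting on the webmaster console. This is only a sketch using Python's standard `urllib.robotparser`, which approximates Googlebot's matching rather than reproducing it. The domain `example.com` and the helper `is_blocked` are hypothetical placeholders, and the rules are written without the blank lines between them, since the parser treats a blank line as the end of a group.

```python
from urllib.robotparser import RobotFileParser

# The rules from Update 2, one per line, with a single blank line
# between the two user-agent groups.
ROBOTS_TXT = """\
User-agent: *
Disallow: /Recommends/
Disallow: /downloads/

User-agent: Googlebot
Disallow: /2007/08/plagiarism-checker-outsourcing.html
Disallow: /2007/07/gather-success-review.html
Disallow: /2007/06/wordpress-seo-masterclass-for-competitive-niches.html
Disallow: /2007/05/bidvertiser-review.html
Disallow: /2007/05/seo-consulting.html
Disallow: /2007/04/ibegin-source-review.html
Disallow: /2007/03/sponsored-reviews-now-live-in-depth-review.html
Disallow: /2007/03/volusion-review-and-suggestions.html
Disallow: /2006/12/search-engine-glossary.html
"""

SITE = "http://example.com"  # hypothetical placeholder domain


def is_blocked(user_agent: str, path: str) -> bool:
    """Return True if the rules above disallow `path` for `user_agent`."""
    parser = RobotFileParser()
    parser.parse(ROBOTS_TXT.splitlines())
    return not parser.can_fetch(user_agent, SITE + path)


if __name__ == "__main__":
    for agent in ("Googlebot", "Slurp"):
        for path in ("/2007/05/seo-consulting.html", "/Recommends/page.html"):
            state = "blocked" if is_blocked(agent, path) else "allowed"
            print(f"{agent:10} {path:35} {state}")
```

One thing this makes visible: in this parser a crawler that matches the `Googlebot` group is checked against that group only, so the `/Recommends/` and `/downloads/` rules in the `*` group do not apply to it. As far as I understand, Google's crawler handles groups the same way, so if those directories should also be hidden from Googlebot, the two `Disallow` lines would need repeating in its group.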

Photo credits

Lowering the Flag (modified)

Matador (modified)
